TAOBench: A Deep Dive into Meta’s Social Network Workload

Audrey Cheng · November 3, 2022

tl;dr: Meta’s social network workload contains a variety of interesting features that we capture in a new benchmark, TAOBench.

As we saw in a previous blog post, there is a crucial need for a new social network benchmark. To address this need, we present TAOBench, the first open source benchmark that generates end-to-end, transactional requests derived from a large-scale social network. With our benchmark, we make Meta’s social graph workload accessible to the wider community and provide visibility into the real-world challenges of supporting such workloads. In this blog post, we describe the benchmark and its workload features, which are now available in open source.

TAO: Meta’s Online Social Graph Data Store

TAOBench derives its workloads from TAO, a read-optimized, geo-distributed data store that supports the family of applications used by Meta’s over 3.6 billion monthly active users. TAO’s production workload offers unique insights into the modern social network. In aggregate, the system serves over 10 billion requests per second on a changing dataset of many petabytes. While this system was initially designed to be eventually consistent, it has since added stronger consistency and isolation guarantees, including read-your-writes (RYW) consistency, failure-atomic write transactions, and read-atomic transactions.

Workload Features

In our characterization of TAO’s workload, we find a variety of interesting features, ranging from access skew to contention, that have significant performance consequences. In this post, we describe three of them; more details can be found in our VLDB paper. These features are captured in TAOBench’s open source workloads.

Feature #1: Read and write hotspots tend to occur on different keys

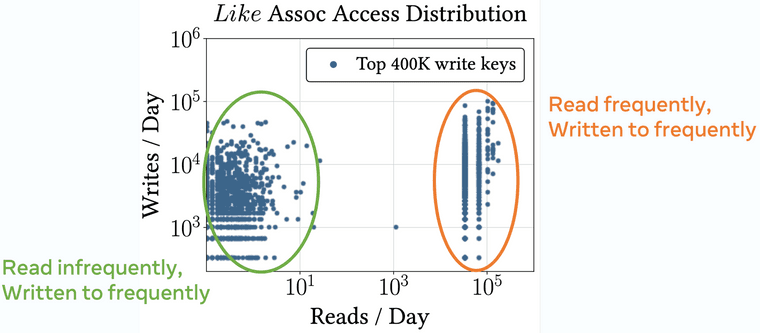

Social network workloads are known to be extremely skewed. We observe access asymmetry on TAO but find that the set of frequently accessed keys (“hot” keys) differs for reads and writes. Specifically, not all keys that are written to regularly are also read frequently. To see this, we study the read / write distribution of edges that represent the well-known “Like” reaction on Meta.

Figure 1 shows the breakdown of read and write frequency for “Like” assocs, or edges, in the social graph. Note that we look at the top 400K write keys in this graph because we find the tail does not differ significantly beyond this point. We see that there are two clusters of data: 1) keys that are read infrequently but written to frequently and 2) keys that are both read and written to frequently. An example of a key from the first cluster could be an unpopular post that is written to by asynchronous jobs (data migration, processing, etc.). On the other hand, a key from the second cluster could be a post by a celebrity that is both viewed and liked often. We find that read and write hotspots can occur on different keys, even for the same key type, and these access patterns are expressed by TAOBench.
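To make the two clusters concrete, here is a minimal sketch of how the top written keys could be split by read frequency. The function name, the `read_threshold` cutoff, and the sample counts are all hypothetical and chosen for illustration; they are not taken from TAO or TAOBench.

```python
from collections import Counter


def classify_hot_keys(reads, writes, top_n, read_threshold):
    """Split the top-N written keys into the two clusters described above.

    reads / writes: Counter mapping key -> number of accesses (hypothetical data).
    read_threshold: illustrative cutoff separating "rarely read" from "often read".
    """
    top_written = [key for key, _ in writes.most_common(top_n)]
    # Cluster 1: written to frequently but read infrequently.
    write_only_hot = [k for k in top_written if reads[k] < read_threshold]
    # Cluster 2: both read and written to frequently.
    read_write_hot = [k for k in top_written if reads[k] >= read_threshold]
    return write_only_hot, read_write_hot


# Made-up access counts for three "Like" edges.
reads = Counter({"celebrity_post": 9000, "migrated_post": 3, "quiet_post": 5})
writes = Counter({"celebrity_post": 800, "migrated_post": 750, "quiet_post": 2})

write_only_hot, read_write_hot = classify_hot_keys(
    reads, writes, top_n=2, read_threshold=100
)
print(write_only_hot)  # keys from the first cluster
print(read_write_hot)  # keys from the second cluster
```

In this toy data, the post touched by a migration job lands in the first cluster and the celebrity post lands in the second.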

Feature #2: Hot keys tend to be on the same shards (but not in the same transactions)

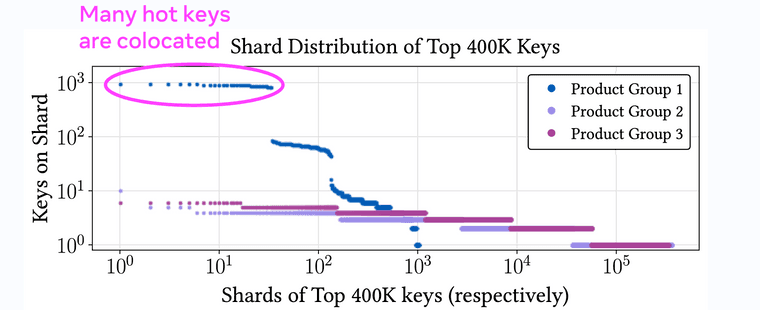

Our second feature focuses on the colocation patterns of hot keys in write transactions. Specifically, we study the shard distribution of the top 400K write transaction keys for the three main product groups on TAO (product groups represent families of applications that share product infrastructure and access the same data).

In Figure 2, we plot how many hot keys are stored on each shard. For example, the dots circled in magenta represent roughly 50 shards in Product Group 1 that each contain around 1,000 hot keys. For all three product groups, we see that many hot keys tend to fall on the same few shards. Upon further investigation, we find that these hot keys are rarely part of the same transactions. While standard practice would be to spread hot keys more evenly for load balancing, application developers have explicitly colocated many of these keys to enable more efficient batched reads when queries hit the database. These results illustrate a tradeoff between load balancing and higher performance for a subset of queries. TAOBench captures these sharding patterns, which can have significant performance consequences.
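The two measurements behind this observation (hot keys per shard, and whether hot keys share transactions) can be sketched as follows. The helper names, the key-to-shard mapping, and the sample transactions are assumptions made for illustration only.

```python
from collections import Counter


def hot_keys_per_shard(hot_keys, key_to_shard):
    """Count how many hot keys live on each shard (the quantity plotted per dot)."""
    return Counter(key_to_shard[key] for key in hot_keys)


def transactions_with_multiple_hot_keys(transactions, hot_keys):
    """Count transactions that touch more than one hot key."""
    hot = set(hot_keys)
    return sum(1 for txn in transactions if len(hot & set(txn)) > 1)


# Hypothetical placement: three hot keys colocated on shard 0, one on shard 1.
key_to_shard = {"a": 0, "b": 0, "c": 0, "d": 1}
hot_keys = ["a", "b", "c", "d"]
# Hypothetical write transactions: each touches one hot key and one cold key.
transactions = [["a", "x"], ["b", "y"], ["c", "z"], ["d", "w"]]

per_shard = hot_keys_per_shard(hot_keys, key_to_shard)
shared = transactions_with_multiple_hot_keys(transactions, hot_keys)
print(per_shard, shared)
```

Here the hot keys concentrate on shard 0, yet no transaction contains two of them, which mirrors the pattern described above at a toy scale.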

Feature #3: Transactional conflicts can often be intentional

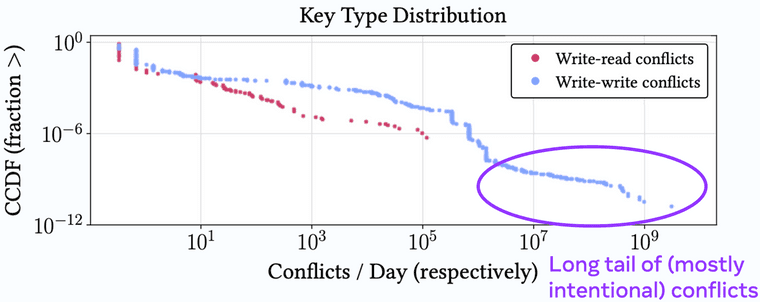

For our third feature, we study the contention distribution of transactional workloads on the social graph. We find that while most applications have few conflicts, a small portion of applications have very high contention rates. Furthermore, many conflicts are intentional—applications leverage atomicity guarantees from the system to safely send conflicting requests and expect that only one of them will succeed.

Figure 3 shows the breakdown of conflicts for transactions on TAO based on key type, which is a proxy for application use case. We show only write-read and write-write conflicts because TAO does not yet have blocking reads. The CCDF (complementary CDF) shows that while most key types generate few conflicts, there is a long tail in conflict frequency, with a few applications generating many conflicts (shown in the purple circle).
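For readers less familiar with this kind of plot: a CCDF gives, for each conflict count x, the fraction of key types with more than x conflicts. The sketch below computes it over made-up per-key-type conflict counts; the data is illustrative and not taken from TAO.

```python
def ccdf(values):
    """Return (x, P(X > x)) pairs for each distinct x, in ascending order."""
    n = len(values)
    ordered = sorted(values)
    points = []
    for i, value in enumerate(ordered):
        # Emit one point per distinct value, at its last occurrence.
        if i + 1 == n or ordered[i + 1] != value:
            points.append((value, (n - (i + 1)) / n))
    return points


# Hypothetical conflict counts: most key types see few conflicts, one sees many.
conflicts_per_key_type = [0, 0, 0, 1, 1, 2, 3, 500]
points = ccdf(conflicts_per_key_type)
for x, fraction in points:
    print(x, fraction)
```

A long tail shows up as a curve that drops quickly for small x but only reaches zero at a very large x.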

When we take a deeper look, we find that 97.3% of write-write conflicts are intentional. As an example of how these conflicts can arise, consider an application that needs to annotate live video in time slices. To do so, it must create edges in the social graph referring to these time slices. For timely processing, the application sends redundant inserts into the system and relies on transactional guarantees to ensure that only one of these requests will succeed. Conflict errors are safely ignored by the application. In summary, intentional conflicts account for a significant portion of the contention observed in these workloads and are captured by TAOBench.
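The redundant-insert pattern can be sketched with a toy in-memory store. `ToyGraphStore`, `ConflictError`, and `insert_redundantly` are hypothetical names, and the attempts here run sequentially for simplicity, whereas the real requests would be concurrent and resolved by the system's atomicity guarantees.

```python
class ConflictError(Exception):
    """Raised when an insert targets an edge that already exists."""


class ToyGraphStore:
    """Hypothetical stand-in for a store that rejects conflicting inserts."""

    def __init__(self):
        self._edges = {}

    def insert_edge(self, key, value):
        if key in self._edges:
            raise ConflictError(key)
        self._edges[key] = value


def insert_redundantly(store, key, value, attempts):
    """Send the same insert several times and ignore conflict errors.

    Returns the number of attempts that succeeded.
    """
    succeeded = 0
    for _ in range(attempts):
        try:
            store.insert_edge(key, value)
            succeeded += 1
        except ConflictError:
            # Intentional conflict: another copy of the request already won.
            pass
    return succeeded


store = ToyGraphStore()
winners = insert_redundantly(store, ("video_1", "slice_0"), "annotation", attempts=3)
print(winners)
```

From the application's point of view, the conflict errors are expected outcomes rather than failures, which is why they inflate measured contention without indicating a problem.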

Anatomy of TAOBench

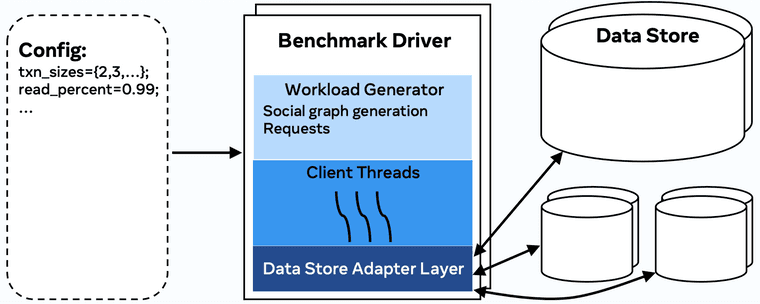

Through TAOBench, we open source a scalable, distributed framework for capturing social network request patterns (Figure 4). Notably, the benchmark provides a small set of parameters, including transaction size, key to shard mapping, and frequency of operation types, that are sufficient to replicate production workloads. We also open source several workload configurations based on these parameters that capture different request patterns on TAO, including the transactional and overall workload.
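As a rough illustration of how such a small parameter set can drive request generation, consider the sketch below. It does not reflect TAOBench's actual configuration format or code; `WorkloadConfig`, its field names, and `generate_request` are assumptions made for this example.

```python
import random
from dataclasses import dataclass


@dataclass
class WorkloadConfig:
    """Hypothetical parameter set mirroring the three parameters named above."""
    operation_weights: dict  # operation type -> relative frequency
    transaction_sizes: dict  # number of keys in a transaction -> relative frequency
    key_to_shard: dict       # key -> shard id


def generate_request(config, rng):
    """Sample one request as (operation, [(key, shard), ...])."""
    ops = list(config.operation_weights)
    op = rng.choices(ops, weights=[config.operation_weights[o] for o in ops])[0]
    if op.endswith("_txn"):
        sizes = list(config.transaction_sizes)
        size = rng.choices(
            sizes, weights=[config.transaction_sizes[s] for s in sizes]
        )[0]
    else:
        size = 1  # plain reads and writes touch a single key
    keys = rng.sample(list(config.key_to_shard), size)
    return op, [(key, config.key_to_shard[key]) for key in keys]


config = WorkloadConfig(
    operation_weights={"read": 90, "write": 5, "read_txn": 3, "write_txn": 2},
    transaction_sizes={2: 70, 3: 30},
    key_to_shard={"a": 0, "b": 0, "c": 1, "d": 1},
)
op, keys = generate_request(config, random.Random(0))
print(op, keys)
```

A real generator would also need to model access skew and colocation, but the idea is the same: a few distributions are sampled to produce each request.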

TAOBench has a simple API (reads, writes, read transactions, and write transactions) that can be easily adapted to any database. Currently, we provide support for CockroachDB, MySQL, PlanetScale, Postgres, TiDB, and YugabyteDB. The process to add support for a new system is straightforward, and we encourage potential users to reach out!
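To give a sense of what adapting a database to a four-operation API might involve, here is a hypothetical sketch. It is not TAOBench's actual interface: the class names, method names, and signatures are invented for illustration, and the in-memory backend is single-threaded.

```python
from abc import ABC, abstractmethod


class DatabaseAdapter(ABC):
    """Hypothetical adapter exposing the four operation types named above."""

    @abstractmethod
    def read(self, key): ...

    @abstractmethod
    def write(self, key, value): ...

    @abstractmethod
    def read_transaction(self, keys): ...

    @abstractmethod
    def write_transaction(self, items): ...


class InMemoryAdapter(DatabaseAdapter):
    """Toy backend; a real adapter would issue queries to the target database."""

    def __init__(self):
        self._data = {}

    def read(self, key):
        return self._data.get(key)

    def write(self, key, value):
        self._data[key] = value

    def read_transaction(self, keys):
        # Return all requested values from one consistent view of the data.
        return {key: self._data.get(key) for key in keys}

    def write_transaction(self, items):
        # Apply every write in a single update call (single-threaded toy only).
        self._data.update(dict(items))


adapter = InMemoryAdapter()
adapter.write_transaction({"a": 1, "b": 2})
print(adapter.read_transaction(["a", "b", "c"]))
```

Under this assumed design, supporting a new system would mean implementing these four methods against that system's client library.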

Thanks to Xiao Shi and Aaron Kabcenell for feedback on this post.