Where Are All the Social Network Benchmarks?

The Lack of Representative Workloads

Audrey Cheng · November 1, 2022

tl;dr: Despite the enduring popularity of social networks, there are few benchmarks that accurately capture the production behavior of this important application domain. To address this gap, we introduce TAOBench, a new benchmark that replicates Meta’s social graph workloads.

Social networks have been around for decades, but despite their enduring popularity, there are surprisingly few benchmarks for systems in this domain. As a result, researchers have limited tools to understand their performance, and industry practitioners face challenges in evaluating new features and optimizations. In this blog post, we lay out the challenges with existing benchmarks, and the second blog post in this series details TAOBench, which addresses the lack of representative workloads for this application domain.

Existing Benchmarks

While there are standard benchmarks for OLTP workloads, such as TPC-C, few equivalents exist for social networks. Most are derived from synthetic data and have not been shown to fully capture the skew, high correlation, and read-heavy nature of these workloads. Others lack transactions or information about colocation preferences and constraints (e.g., data may have to reside on specific shards for legal reasons).

While there are a few existing social network benchmarks, they are limited in significant ways. We describe two of the most prominent ones below.

LinkBench derives its workload from requests of a single MySQL instance that underlies TAO at Meta.

- Based on production traces (the only benchmark we know of that is)

- Lacks graph-level transactions

- Does not provide sharding information

- Captures only a small subset of the full social graph workload, since it excludes all requests served from cache

LDBC has developed a synthetic social network benchmark that targets graph databases.

- Focuses on complex, processing-intensive queries rather than serving workloads

- Contains a limited number of query types to represent social network actions

- Does not have sharding information

Necessary Properties

As we can see, there are prominent features missing from these benchmarks. What are the properties that a social network benchmark’s workloads should satisfy? We identify five features that are essential for this application domain.

P1. Accurately emulates social network requests: Given that benchmarks are only as useful as the workloads they are derived from, it is essential that a social network benchmark accurately represents real-world systems. The only social network benchmark we are aware of that is derived from production traces is LinkBench from Meta. However, its workload is centered around the persistent storage layer, so it excludes the majority of application requests that hit cache. Other benchmarks, such as BigDataBench, focus on graph data rather than access patterns, so they may not fully express production workload patterns.

P2. Captures any transactional requirements: Transactions are a critical part of the social network workload, and previous work has demonstrated that these requests have significant performance implications. However, among existing social network benchmarks, only LDBC contains (read-write) transactions.

P3. Expresses colocation preferences and constraints: Given the rampant growth of social networks, sharding is essential to their underlying systems. For those that expose sharding to users, data placement is not simply an implementation detail but can be a reflection of user intent, privacy constraints, or regulatory compliance. As a result, data colocation patterns are a critical part of the workload because they can have significant performance consequences. To the best of our knowledge, no social network benchmark contains derived data on the sharding constraints of the supporting data store.
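To make the distinction between a colocation constraint and a colocation preference concrete, here is a minimal Python sketch. It is purely illustrative and does not describe how TAO or any benchmark actually places data: the `Placement` class, the `assign_shard` function, and the hash-based fallback are all hypothetical names and choices introduced for this example. A constraint restricts the set of shards an object may live on, while a preference only asks for the same shard as a related object when that is allowed.

```python
import zlib
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class Placement:
    """Hypothetical placement metadata attached to one object."""
    # Hard constraint: the object may only live on these shards (None = any shard).
    allowed_shards: Optional[FrozenSet[int]] = None
    # Soft preference: try to land on the same shard as this other key.
    colocate_with: Optional[str] = None


def assign_shard(key: str, placement: Placement,
                 assignments: Dict[str, int], num_shards: int) -> int:
    """Pick a shard that always honors the constraint and, if possible, the preference."""
    if placement.allowed_shards is not None:
        allowed = placement.allowed_shards
    else:
        allowed = frozenset(range(num_shards))

    # Honor the preference only when it does not violate the constraint.
    preferred = assignments.get(placement.colocate_with)
    if preferred in allowed:
        return preferred

    # Fallback (an assumption of this sketch): deterministic hash over allowed shards.
    candidates = sorted(allowed)
    return candidates[zlib.crc32(key.encode()) % len(candidates)]


if __name__ == "__main__":
    assignments = {"user:1": 3}
    # Preference satisfied: the post follows its author to shard 3.
    print(assign_shard("post:9", Placement(colocate_with="user:1"), assignments, 8))
    # Constraint wins: shard 3 is not allowed, so the post stays within {0, 1}.
    pinned = Placement(allowed_shards=frozenset({0, 1}), colocate_with="user:1")
    print(assign_shard("post:9", pinned, assignments, 8))
```

A workload that records this kind of metadata lets a system under test see when requests are forced across shards, which is where the performance consequences come from.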

P4. Models request distributions without prescriptive query types: Most existing benchmarks consist of small, fixed sets of query types representing the behavior of specific applications. For example, LDBC’s social network benchmark contains 29 query types meant to simulate a social network akin to Meta’s. In contrast, many large-scale, real-world platforms have a wide range of applications whose request patterns change with continued development. Representing workloads as probability distributions, in a way that is agnostic to application actions, lets us evaluate performance without having to enumerate individual queries or replicate their code. These two strategies expose a tradeoff: isolating particular query types makes a workload easier to understand, while modeling it via distributions makes it easier to adapt.
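As a rough illustration of what modeling a workload via distributions can look like, the Python sketch below draws each request's operation type and target key from probability distributions instead of replaying a fixed list of queries. Everything here is an assumption made for the example: the operation names, the mix percentages, the Zipf-like key skew, and the `generate_requests` function are hypothetical and are not measured values or any benchmark's actual parameters.

```python
import random
from collections import Counter

# Hypothetical operation mix (illustrative numbers only, not measured values).
OPERATION_MIX = {"read": 0.90, "write": 0.08, "read_txn": 0.015, "write_txn": 0.005}


def zipf_weights(num_keys, skew):
    """Unnormalized Zipf-like weights: the key at rank r gets weight 1 / r**skew."""
    return [1.0 / (rank ** skew) for rank in range(1, num_keys + 1)]


def generate_requests(num_requests, num_keys=1000, skew=1.1, seed=0):
    """Sample (operation, key) pairs from the two distributions above."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    ops = list(OPERATION_MIX)
    op_weights = list(OPERATION_MIX.values())
    key_weights = zipf_weights(num_keys, skew)
    chosen_ops = rng.choices(ops, weights=op_weights, k=num_requests)
    chosen_keys = rng.choices(range(num_keys), weights=key_weights, k=num_requests)
    return list(zip(chosen_ops, chosen_keys))


if __name__ == "__main__":
    requests = generate_requests(10_000)
    print(Counter(op for op, _ in requests))                  # operation counts
    print(Counter(key for _, key in requests).most_common(3))  # hottest keys
```

Changing the workload then means changing the distribution parameters rather than rewriting queries, which is the adaptability side of the tradeoff described above.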

P5. Exhibits multi-tenant behavior on shared data: Most benchmarks only generate the workload of a specific application. In contrast, the distributed databases that support social networks often have multiple tenants with shared data ownership and varying transactional needs. In production, applications often access the same data and consequently affect the behavior of other products. The complex patterns that arise out of these indirect interactions are an important aspect of these workloads and should be expressed by a social network benchmark.

The Current State of the Art

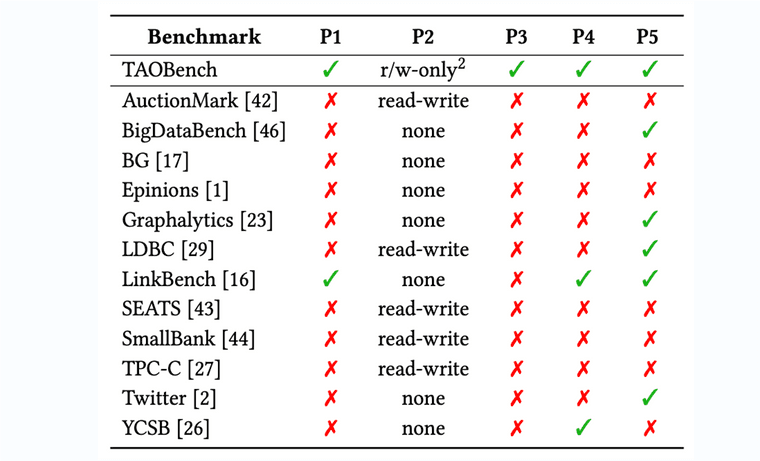

How do existing benchmarks stack up against these criteria? As we see in Table 1, no existing tool satisfies all five, leaving a gap in the available tools and data that researchers and developers have to inform system design decisions. We need a new social network benchmark that satisfies all of these properties.

To address the lack of publicly available, realistic workloads, we present TAOBench, a new benchmark based on Meta’s social network request patterns. See part two of this three-part series for details about this benchmark and interesting features we observed in Meta’s production workloads.

Thanks to Xiao Shi and Aaron Kabcenell for feedback on this post.